Manufacturing enterprises running critical supplier and production systems cannot afford downtime, inconsistent deployments, or weak disaster recovery strategies.

When multiple business applications operate on traditional on-prem infrastructure, common challenges emerge:

- Slow, manual deployments

- No standardized CI/CD

- Limited scalability during production peaks

- Weak audit controls

- No structured disaster recovery strategy

In this post, I’ll walk through how we modernized a multi-application platform on AWS for a high-precision manufacturing enterprise by implementing:

- Standardized CI/CD across three business-critical applications

- Auto Scaling EC2 architecture

- Amazon RDS with automated backups

- Cross-region Disaster Recovery using AWS Elastic Disaster Recovery (DRS)

- IAM, WAF, and KMS-based security controls

- Centralized monitoring and audit logging

The transformation improved release velocity, resilience, and compliance while reducing infrastructure costs by ~35%.

Note: For confidentiality reasons, specific client identifiers and sensitive implementation details have been generalized. The application names used in this blog (Supplier Portal, Tool Pulse, and Gauge Caliber) are representative placeholders, while the architecture, deployment patterns, and operational practices reflect the actual solution implemented.

The Technical Challenges

The enterprise operated three core applications:

- Supplier Portal

- Tool Pulse

- Gauge Caliber

Application Context

To better understand the architecture and workload characteristics, here is a brief overview of each application:

- Supplier Portal – A customer-facing application used by vendors for onboarding, order tracking, and supply chain coordination. This system experiences peak traffic during procurement cycles and requires high availability.

- Tool Pulse – An internal analytics and monitoring platform that provides real-time insights into manufacturing operations, equipment utilization, and production efficiency.

- Gauge Caliber – A quality assurance and calibration management system responsible for maintaining measurement accuracy, compliance records, and inspection workflows.

The limitations of the existing environment included:

1. Manual Deployment Model

- No CI/CD

- Human intervention required for releases

- High rollback risk

- Long release cycles

2. Limited Scalability

- Static infrastructure

- No auto-scaling

- Performance degradation during peak usage

3. Security & Compliance Gaps

- No fine-grained IAM controls

- Limited audit visibility

- No structured encryption controls

4. Disaster Recovery Risks

- No automated failover

- Manual backup processes

- High RTO and RPO exposure

5. Operational Overhead

- Physical server management

- Maintenance complexity

- High infrastructure cost

The objective was not just migration — it was to design a resilient, scalable, DevOps-driven, multi-application platform.

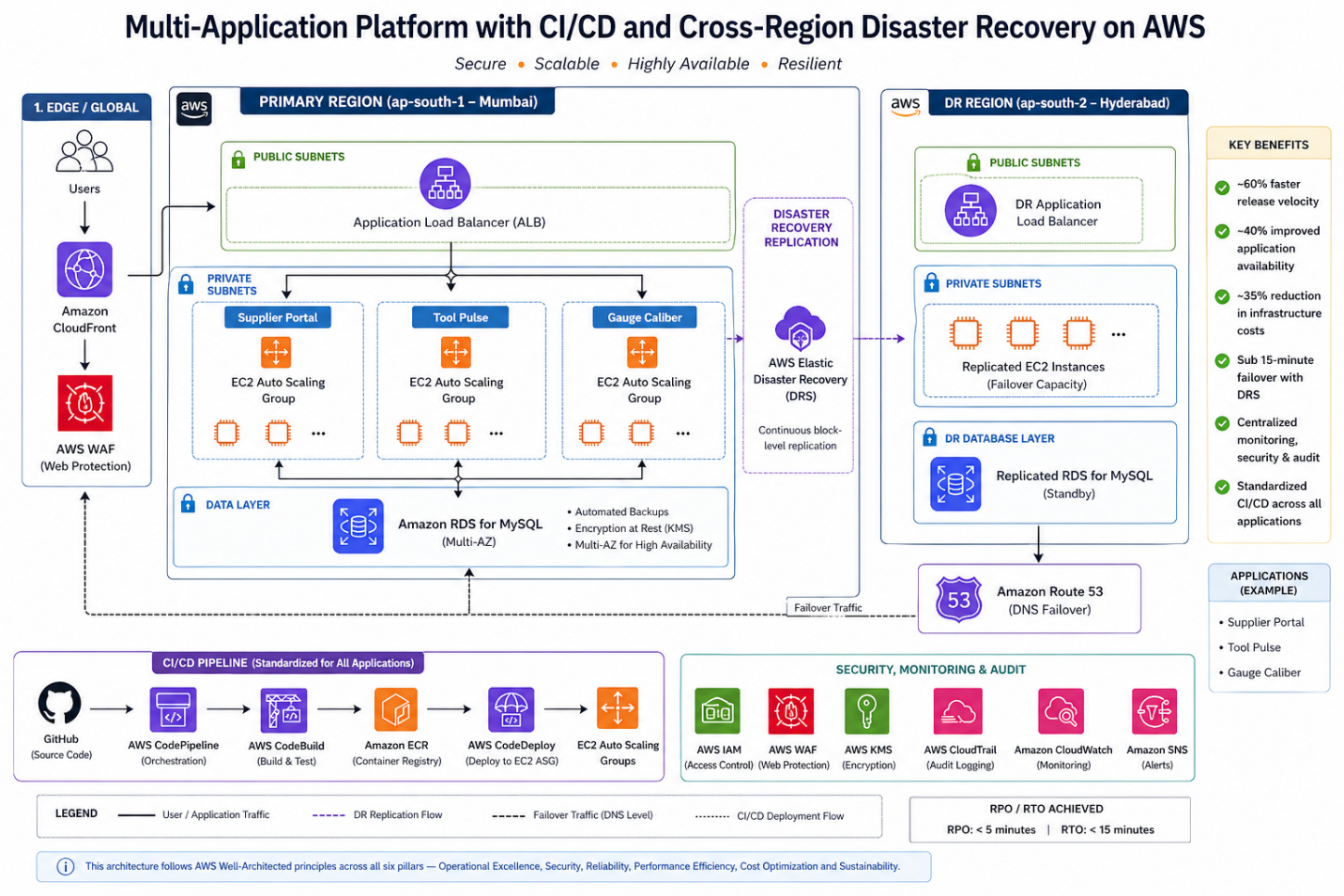

Solution Architecture Overview

All three applications were deployed using a standardized pattern:

Compute Layer

- Amazon EC2 instances

- Auto Scaling Groups

- Private subnets

- Application Load Balancers (ALB) for high availability

Database Layer

- Amazon RDS (MySQL)

- Automated backups enabled

- Multi-AZ deployment for availability

CI/CD Stack

- GitHub (source control)

- AWS CodePipeline

- AWS CodeBuild

- AWS CodeDeploy

Security Controls

- AWS IAM for role-based access

- AWS WAF for web protection

- Amazon CloudFront for secure content delivery

- AWS KMS for encryption

- AWS CloudTrail for audit logging

Monitoring & Alerting

- Amazon CloudWatch

- Amazon SNS for alert notifications

Disaster Recovery

- AWS Elastic Disaster Recovery (DRS)

- Secondary region replication (Hyderabad)

- Defined RPO/RTO targets

Standardized CI/CD for All Applications

One of the most impactful design decisions was implementing a centralized CI/CD pipeline across all three applications.

Deployment Flow

- Code committed to GitHub

- CodePipeline triggers automatically

- CodeBuild:
  - Compiles the application
  - Executes unit tests

- CodeDeploy:
  - Deploys to staging
  - Promotes to the production EC2 Auto Scaling Group

This standardization ensured:

- Repeatable deployments

- Reduced human error

- Faster release cycles

- Controlled promotion across Dev → QA → Prod

Release velocity improved by ~60%.
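CodePipeline orchestrates the promotion in the actual setup. To make the gating logic concrete, here is a minimal bash sketch of the same idea using the AWS CLI. It deploys one revision to each environment in turn with `aws deploy create-deployment`, then blocks on `aws deploy wait deployment-successful` before moving to the next stage. The deployment-group naming (`<app>-dev`, `<app>-qa`, `<app>-prod`), the `ARTIFACT_BUCKET` variable, and the function names are hypothetical. Setting `DRY_RUN=1` prints the commands to stderr instead of calling AWS.

```bash
#!/usr/bin/env bash
# Sketch: promote one revision Dev -> QA -> Prod with AWS CodeDeploy,
# stopping at the first stage whose deployment does not succeed.
# Hypothetical conventions: deployment groups are named <app>-<env> and
# revisions are zip bundles stored in $ARTIFACT_BUCKET.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

promote_revision() {
  local app="$1" bundle_key="$2" env deployment_id
  for env in dev qa prod; do
    deployment_id=$(run aws deploy create-deployment \
      --application-name "$app" \
      --deployment-group-name "${app}-${env}" \
      --s3-location "bucket=${ARTIFACT_BUCKET},key=${bundle_key},bundleType=zip" \
      --query deploymentId --output text) || return 1
    # Block until this stage finishes. The waiter exits non-zero on failure,
    # which stops the loop before the next environment is touched.
    run aws deploy wait deployment-successful \
      --deployment-id "$deployment_id" || return 1
  done
}
```

For example, `ARTIFACT_BUCKET=my-artifacts promote_revision supplier-portal builds/supplier-portal.zip` stops after QA if the QA deployment fails, so a bad build never reaches production.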

appspec.yml — CodeDeploy EC2 Deployment

```yaml
version: 0.0
os: linux
files:
  - source: /
    destination: /var/www/app
hooks:
  BeforeInstall:
    - location: scripts/stop_server.sh
      timeout: 60
      runas: root
  AfterInstall:
    - location: scripts/install_dependencies.sh
      timeout: 120
      runas: root
  ApplicationStart:
    - location: scripts/start_server.sh
      timeout: 60
      runas: root
  ValidateService:
    - location: scripts/validate_service.sh
      timeout: 30
      runas: root
```

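The ValidateService hook is critical: it runs a health check after deployment. If the script exits non-zero, CodeDeploy marks the deployment as failed. When automatic rollback is enabled on the deployment group, CodeDeploy then redeploys the last known good revision. This is what gives you safe, repeatable deployments across all three applications without manual intervention.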
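The appspec references `scripts/validate_service.sh` without showing its contents. Below is a minimal sketch of what such a hook could look like. It assumes the application exposes a hypothetical `http://localhost/health` endpoint, polls it with `curl`, and returns non-zero if the endpoint never answers successfully. The retry count and delays are illustrative. They are sized so the worst case (five 2-second probes plus four 3-second pauses, about 22 seconds) stays inside the 30-second hook timeout.

```bash
#!/usr/bin/env bash
# Sketch of scripts/validate_service.sh: poll the application's health
# endpoint and return non-zero if it never answers, so the CodeDeploy
# ValidateService hook fails the deployment.
# Assumption (hypothetical): the app serves http://localhost/health.

validate_service() {
  local url="$1" retries="${2:-5}" delay="${3:-3}" attempt
  for (( attempt = 1; attempt <= retries; attempt++ )); do
    # -f: fail on HTTP status >= 400; -s: no progress output;
    # --max-time: cap each probe so a hung request cannot eat the timeout.
    if curl -fs --max-time 2 -o /dev/null "$url"; then
      echo "Health check passed on attempt ${attempt}: ${url}"
      return 0
    fi
    # Pause between attempts, but not after the final one.
    if (( attempt < retries )); then
      sleep "$delay"
    fi
  done
  echo "Health check failed after ${retries} attempts: ${url}" >&2
  return 1
}
```

The hook script itself would end with `validate_service "http://localhost/health"`. That way the function's return value becomes the script's exit status, which is what CodeDeploy evaluates.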

Compute Architecture: EC2 + Auto Scaling

Instead of static instances, we deployed:

- EC2 instances in private subnets

- Application Load Balancer in public subnets

- Auto Scaling Groups for dynamic scaling

Why EC2 over Fargate in this case?

- Existing application dependencies required OS-level customization

- Tight integration with legacy libraries

- Gradual modernization strategy

Auto Scaling allowed:

- Dynamic scaling during Supplier Portal peaks

- ~40% improvement in application availability

- Cost-efficient compute during non-peak hours

Auto Scaling Policy — CLI Setup

```bash
# Create Auto Scaling Group for Supplier Portal
aws autoscaling create-auto-scaling-group \
  --auto-scaling-group-name supplier-portal-asg \
  --launch-template LaunchTemplateName=supplier-portal-lt,Version='$Latest' \
  --min-size 2 \
  --max-size 10 \
  --desired-capacity 2 \
  --vpc-zone-identifier "subnet-<private-subnet-1>,subnet-<private-subnet-2>" \
  --target-group-arns arn:aws:elasticloadbalancing:ap-south-1:<account-id>:targetgroup/supplier-portal-tg/<id>

# Attach a CPU-based target tracking policy.
# EC2 Auto Scaling target tracking does not accept ScaleInCooldown or
# ScaleOutCooldown (those are Application Auto Scaling fields); the
# instance warm-up is set on the policy instead.
aws autoscaling put-scaling-policy \
  --auto-scaling-group-name supplier-portal-asg \
  --policy-name cpu-target-tracking \
  --policy-type TargetTrackingScaling \
  --estimated-instance-warmup 60 \
  --target-tracking-configuration '{
    "PredefinedMetricSpecification": {
      "PredefinedMetricType": "ASGAverageCPUUtilization"
    },
    "TargetValue": 60.0
  }'
```

The same ASG pattern was applied consistently across all three applications. Tool Pulse and Gauge Caliber use identical configurations with their respective launch templates and target groups. The policy keeps average CPU near 60%. Target tracking scales out in proportion to how far CPU is above the target but scales in more gradually, which prevents aggressive scale-in that could cause instability. The 60-second instance warm-up lets newly launched instances count toward the group's CPU average quickly during production peak cycles.
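To build intuition for the 60% target, a common simplification treats target tracking as proportional. Under that view, the new capacity is roughly the current capacity × (observed CPU ÷ target CPU), rounded up and clamped to the group's min and max. This is an approximation, not the exact algorithm AWS uses, and real scale-in is more gradual. Treat the helper below (a hypothetical `estimate_capacity`) as a back-of-the-envelope estimate only.

```bash
#!/usr/bin/env bash
# Back-of-the-envelope estimate of where a CPU target tracking policy
# would move an Auto Scaling group (proportional approximation only).
# Arguments: current_capacity observed_cpu_pct target_cpu_pct min max

estimate_capacity() {
  local current="$1" observed="$2" target="$3" min="$4" max="$5" desired
  # Integer ceiling of current * observed / target (round up so the
  # estimate never under-provisions).
  desired=$(( (current * observed + target - 1) / target ))
  # Clamp to the group's min/max size.
  if (( desired < min )); then desired=$min; fi
  if (( desired > max )); then desired=$max; fi
  echo "$desired"
}
```

For example, `estimate_capacity 4 90 60 2 10` prints `6`: four instances averaging 90% CPU against a 60% target need roughly six instances to bring the average back down.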

Database Layer: Managed Resilience with Amazon RDS

All three applications used:

- Amazon RDS for MySQL

- Automated backups

- Multi-AZ failover

- Encryption at rest

Why RDS?

- Managed patching

- Built-in failover

- Reduced DBA overhead

- Consistent performance monitoring

This eliminated manual backup complexity and improved reliability.
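The RDS settings listed above map onto a single `aws rds create-db-instance` call. The sketch below is illustrative rather than the exact command used. The instance class, storage size, 7-day retention, subnet group name, KMS alias, and `DB_SECURITY_GROUP_ID` variable are hypothetical placeholders. `DRY_RUN=1` prints the command instead of running it.

```bash
#!/usr/bin/env bash
# Sketch: Multi-AZ, encrypted RDS for MySQL instance with automated backups.
# Hypothetical placeholders: instance class, storage, retention, subnet group,
# KMS alias and DB_SECURITY_GROUP_ID.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

create_app_db() {
  local app="$1"
  # --backup-retention-period > 0 turns on automated backups;
  # --multi-az adds a synchronous standby in a second AZ;
  # --storage-encrypted with --kms-key-id encrypts storage at rest.
  run aws rds create-db-instance \
    --db-instance-identifier "${app}-db" \
    --engine mysql \
    --db-instance-class db.m6g.large \
    --allocated-storage 100 \
    --master-username admin \
    --manage-master-user-password \
    --multi-az \
    --backup-retention-period 7 \
    --storage-encrypted \
    --kms-key-id alias/app-data \
    --db-subnet-group-name "${app}-db-subnets" \
    --vpc-security-group-ids "${DB_SECURITY_GROUP_ID}" \
    --no-publicly-accessible \
    --region ap-south-1
}
```

`--manage-master-user-password` has RDS keep the master password in AWS Secrets Manager instead of passing it on the command line.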

Security by Design

Security controls were embedded across layers:

Identity & Access

- IAM role-based policies

- Least privilege access
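As one illustration of least privilege, an application's EC2 instance role only needs to read its own deployment revisions. The sketch below makes several assumptions. The function prints an inline policy scoped to one application prefix in a hypothetical artifact bucket and attaches it with `aws iam put-role-policy`. The bucket layout, role name, and policy name are hypothetical, and the S3 actions should be matched to what your CodeDeploy agent setup actually requires.

```bash
#!/usr/bin/env bash
# Sketch: least-privilege inline policy for an application's EC2 instance role.
# Hypothetical layout: revisions live under s3://<bucket>/<app>/ and the
# role is named <app>-ec2-role.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

# Read-only access to this application's prefix, nothing else.
artifact_read_policy() {
  local bucket="$1" app="$2"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "ReadOwnDeploymentRevisions",
      "Effect": "Allow",
      "Action": ["s3:GetObject", "s3:GetObjectVersion"],
      "Resource": "arn:aws:s3:::${bucket}/${app}/*"
    }
  ]
}
EOF
}

attach_artifact_policy() {
  local bucket="$1" app="$2"
  run aws iam put-role-policy \
    --role-name "${app}-ec2-role" \
    --policy-name "${app}-artifact-read" \
    --policy-document "$(artifact_read_policy "$bucket" "$app")"
}
```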

Edge Security

- AWS WAF in front of ALB

- CloudFront for content delivery and protection
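Once a web ACL exists, attaching it to an ALB is a single call. The sketch below assumes a REGIONAL-scope web ACL already created in the ALB's Region (ap-south-1). Both ARNs are caller-supplied placeholders, and the function name is hypothetical. It associates the ACL and then reads the association back to confirm it.

```bash
#!/usr/bin/env bash
# Sketch: attach an existing REGIONAL web ACL to an application's ALB and
# read the association back. ARNs are placeholders supplied by the caller.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

protect_alb() {
  local web_acl_arn="$1" alb_arn="$2"
  run aws wafv2 associate-web-acl \
    --web-acl-arn "$web_acl_arn" \
    --resource-arn "$alb_arn" \
    --region ap-south-1 || return 1
  # Returns the web ACL currently associated with the ALB.
  run aws wafv2 get-web-acl-for-resource \
    --resource-arn "$alb_arn" \
    --region ap-south-1
}
```

CloudFront distributions are not attached this way. A CLOUDFRONT-scope web ACL is managed in us-east-1 and referenced from the distribution configuration instead.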

Encryption

- AWS KMS for data encryption

- Encrypted RDS storage
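A customer managed key with automatic rotation is a typical starting point for the encryption described above. A minimal sketch follows. The alias passed in (for example `alias/app-data`) and the key description are hypothetical. Automatic rotation replaces the key material yearly by default, and existing ciphertext stays decryptable.

```bash
#!/usr/bin/env bash
# Sketch: create a customer managed KMS key, give it a readable alias and
# enable automatic key rotation. Alias and description are hypothetical.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

create_data_key() {
  local alias_name="$1" key_id
  key_id=$(run aws kms create-key \
    --description "Application data encryption key" \
    --query KeyMetadata.KeyId --output text \
    --region ap-south-1) || return 1
  # Services and CLI calls can then refer to the key by alias.
  run aws kms create-alias \
    --alias-name "$alias_name" \
    --target-key-id "$key_id" \
    --region ap-south-1 || return 1
  run aws kms enable-key-rotation \
    --key-id "$key_id" \
    --region ap-south-1
}
```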

Audit & Compliance

- CloudTrail logging for:

- Deployment activities

- IAM changes

- Infrastructure updates

This strengthened ISO compliance readiness and audit traceability.
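A multi-Region trail with log file validation covers the deployment, IAM, and infrastructure events listed above. `lookup-events` can then answer ad-hoc audit questions such as "what changed in IAM recently?". The sketch below assumes a pre-existing S3 bucket that already has a CloudTrail bucket policy. The trail name, bucket name, and function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: multi-Region CloudTrail trail plus an ad-hoc IAM change lookup.
# Trail and bucket names are hypothetical; the bucket must already allow
# CloudTrail to write to it.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

enable_audit_trail() {
  local trail="$1" bucket="$2"
  run aws cloudtrail create-trail \
    --name "$trail" \
    --s3-bucket-name "$bucket" \
    --is-multi-region-trail \
    --enable-log-file-validation \
    --region ap-south-1 || return 1
  # A trail created from the CLI does not record events until logging starts.
  run aws cloudtrail start-logging --name "$trail" --region ap-south-1
}

recent_iam_changes() {
  # IAM is a global service; its events are recorded in us-east-1.
  run aws cloudtrail lookup-events \
    --lookup-attributes AttributeKey=EventSource,AttributeValue=iam.amazonaws.com \
    --max-results 20 \
    --region us-east-1
}
```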

Disaster Recovery with AWS Elastic Disaster Recovery (DRS)

For a high-precision manufacturing enterprise, unplanned downtime is not just an IT problem — it is a production stoppage with direct revenue and contractual impact. This made structured, testable disaster recovery a non-negotiable part of the architecture, not an afterthought.

Previous State:

- Manual recovery with no documented runbook

- Hours of downtime during any infrastructure failure

- No cross-region failover capability

- Backup processes dependent on individual team members

New Design — How DRS Was Implemented:

AWS Elastic Disaster Recovery works by installing a lightweight replication agent on each source server. Once installed, the agent performs continuous block-level replication of the server’s disk to a staging area in the secondary region (Hyderabad — ap-south-2). This means the recovery environment is always within minutes of the production state, not hours.

The implementation followed three phases:

Phase 1: Agent installation and initial sync. The replication agent was installed on all source servers hosting the Supplier Portal, Tool Pulse, and Gauge Caliber applications. The initial full sync took approximately 4–6 hours per server, depending on disk size. After the initial sync, replication is continuous and lightweight, typically using under 5% of server CPU.

```bash
# Install the AWS Replication Agent on each source server (Linux).
# --region is the Region you replicate INTO, i.e. the recovery Region
# (Hyderabad, ap-south-2), and the installer is downloaded from that Region.
wget -O ./aws-replication-installer-init.py \
  https://aws-elastic-disaster-recovery-ap-south-2.s3.ap-south-2.amazonaws.com/latest/linux/aws-replication-installer-init.py

sudo python3 aws-replication-installer-init.py \
  --region ap-south-2 \
  --aws-access-key-id <replication-user-access-key> \
  --aws-secret-access-key <replication-user-secret-key> \
  --no-prompt
```

Replication credentials are created once in the DRS console under Settings → Replication Credentials. Never use your primary IAM credentials here; create a dedicated replication IAM user with DRS-only permissions.

Phase 2: Recovery settings configuration. For each source server, recovery instance settings were configured in the DRS console:

- Instance type mapping: the production EC2 instance type matched in the secondary region

- Subnet and security group assignment in ap-south-2

- Launch template for recovery instances, pre-configured to avoid manual steps during an actual failover

Phase 3: DR drill validation. Before go-live, and quarterly thereafter, non-disruptive DR drills were run using the `--is-drill` flag. This launches recovery instances in a separate, isolated network while replication from the source servers continues, so production traffic is unaffected. Each drill validated:

- Recovery instance launches successfully within the RTO window

- Application starts and passes health checks

- Database connectivity to the replicated RDS snapshot

- End-to-end smoke test via internal URL

```bash
# Launch a non-disruptive DR drill. --is-drill is a boolean switch and takes
# no value; DRS API calls go to the recovery Region where DRS runs.
aws drs start-recovery \
  --source-servers '[{"sourceServerID": "<source-server-id>"}]' \
  --is-drill \
  --region ap-south-2

# Terminate drill instances once validated
aws drs terminate-recovery-instances \
  --recovery-instance-ids '["<recovery-instance-id>"]' \
  --region ap-south-2
```

The `--is-drill` switch is the most important detail here. Without it (or with `--no-is-drill`), start-recovery launches real recovery instances instead of drill instances. Drill instances run in an isolated network, so production traffic is completely unaffected. Always validate this in a non-production window before your first real DR event.
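Before starting a drill, it is worth confirming that every source server has finished its initial sync. DRS reports a per-server data replication state through `describe-source-servers`. The sketch below lists those states and treats anything other than `CONTINUOUS` as not ready. It assumes DRS runs in the Hyderabad recovery Region (ap-south-2), and the function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: check that all DRS source servers are in continuous replication
# before launching a drill. Function names are hypothetical.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

# Succeeds only for the steady-state replication value.
is_replication_ready() {
  [[ "$1" == "CONTINUOUS" ]]
}

# Prints "<source-server-id> <replication-state>" per line.
list_replication_states() {
  run aws drs describe-source-servers \
    --query 'items[].[sourceServerID, dataReplicationInfo.dataReplicationState]' \
    --output text \
    --region ap-south-2
}

# Returns non-zero if any server is still syncing, stalled or disconnected.
drill_ready() {
  local id state not_ready=0
  while read -r id state; do
    [[ -z "$id" ]] && continue
    if ! is_replication_ready "$state"; then
      echo "Not ready: ${id} (${state})" >&2
      not_ready=1
    fi
  done < <(list_replication_states)
  return "$not_ready"
}
```

Running `drill_ready && echo "OK to start drill"` as the first step of the drill runbook prevents a drill from failing simply because a server is still in its initial sync.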

Defined RPO / RTO Thresholds:

| Metric | Target | Achieved |
|---|---|---|
| RPO (Recovery Point Objective) | ≤ 30 minutes | ~5 minutes (continuous replication) |
| RTO (Recovery Time Objective) | ≤ 1 hour | < 15 minutes (automated launch) |

The RPO achieved is significantly better than the target because DRS replicates continuously at the block level, unlike snapshot-based backups, which capture state only at fixed intervals.
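Drill results are easiest to audit when they are compared against the thresholds mechanically. The sketch below checks measured values (in minutes) against the targets in the table above, which are 30 minutes RPO and 60 minutes RTO by default. It also converts two Unix timestamps, such as drill start and first passing health check, into whole minutes. Both function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: compare measured recovery metrics (minutes) with their targets.
# Defaults follow the table: RPO <= 30 min, RTO <= 60 min.

# Whole minutes, rounded up, between two Unix timestamps.
minutes_between() {
  local start="$1" end="$2"
  echo $(( (end - start + 59) / 60 ))
}

# Prints one PASS/FAIL line per metric; returns non-zero if any metric fails.
check_objectives() {
  local rpo_measured="$1" rto_measured="$2"
  local rpo_target="${3:-30}" rto_target="${4:-60}" status=0
  if (( rpo_measured <= rpo_target )); then
    echo "RPO PASS: ${rpo_measured} min <= ${rpo_target} min"
  else
    echo "RPO FAIL: ${rpo_measured} min > ${rpo_target} min"
    status=1
  fi
  if (( rto_measured <= rto_target )); then
    echo "RTO PASS: ${rto_measured} min <= ${rto_target} min"
  else
    echo "RTO FAIL: ${rto_measured} min > ${rto_target} min"
    status=1
  fi
  return "$status"
}
```

For example, a drill timed at 720 seconds gives `minutes_between 0 720` = 12, and `check_objectives 5 12` then reports PASS for both metrics.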

Actual Failover Sequence (when triggered):

1. Declare a recovery event in the DRS console or via the CLI

2. DRS launches pre-configured recovery instances in Hyderabad from the latest replicated state

3. DNS records are updated to route traffic to the secondary region

4. Health checks validate application availability

5. The team confirms normal operation, and the failover is complete

Steps 1–4 are automated; step 5 is the only human-in-the-loop action.
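The DNS update in the failover sequence can be scripted as a single Route 53 UPSERT. The sketch below assumes the application is served from a subdomain (a CNAME cannot sit at the zone apex) and that a recovery ALB exists in the Hyderabad Region. The hosted zone ID, hostname, ALB DNS name, and function names are placeholders. A low TTL (60 seconds here) limits how long clients keep resolving to the failed Region.

```bash
#!/usr/bin/env bash
# Sketch: point an application hostname at the recovery-Region ALB via a
# Route 53 UPSERT. Zone ID, hostname and ALB DNS name are placeholders.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

# Build the change batch JSON for one CNAME record.
dns_failover_batch() {
  local hostname="$1" target="$2" ttl="${3:-60}"
  cat <<EOF
{
  "Comment": "DR failover to recovery Region",
  "Changes": [
    {
      "Action": "UPSERT",
      "ResourceRecordSet": {
        "Name": "${hostname}",
        "Type": "CNAME",
        "TTL": ${ttl},
        "ResourceRecords": [{ "Value": "${target}" }]
      }
    }
  ]
}
EOF
}

switch_dns() {
  local zone_id="$1" hostname="$2" target="$3"
  run aws route53 change-resource-record-sets \
    --hosted-zone-id "$zone_id" \
    --change-batch "$(dns_failover_batch "$hostname" "$target")"
}
```

An alternative design is Route 53 failover routing with health checks. It switches records automatically when the primary endpoint becomes unhealthy, which removes this step from the runbook.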

Results:

- Failover time: hours of manual recovery → < 15 minutes automated

- Recovery testing: ad-hoc and untested → quarterly validated drills

- Business risk: unquantified → defined, documented, and insured

- Audit readiness: manual records → CloudTrail-logged failover events

A Presales Note on Selling DR

In Presales conversations, Disaster Recovery is the capability every enterprise says they want — and the first line item cut from the budget. The two objections I encounter most are: “We’ve never had a major outage” and “It sounds too complex to maintain.”

AWS Elastic DRS changed both conversations. On complexity: the agent installs in under 30 minutes per server and replication is fully managed — there is no DR infrastructure to maintain. On risk: the quarterly drill model lets customers see recovery happen before they need it. When a customer watches their application come up in a secondary region in 12 minutes during a drill, the budget conversation changes entirely.

For manufacturing enterprises specifically, the framing that resonates most is not technical — it is contractual. A single production stoppage that breaches an SLA with a Tier-1 customer costs more than the annual DRS bill. That is the business case, and it closes fast.

Monitoring & Operational Visibility

Operational visibility included:

Amazon CloudWatch

- EC2 metrics

- Auto Scaling activity

- RDS performance

- Application logs

Amazon SNS

- Alert notifications

- Incident escalation triggers

Combined with CloudTrail, the platform delivered:

- Proactive alerting

- Faster MTTR

- Audit-ready logging
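The alerting path is a CloudWatch alarm whose action publishes to an SNS topic. The sketch below covers one signal: sustained high average CPU on an application's Auto Scaling group. It assumes a hypothetical `<app>-alerts` topic, an email subscriber, and an 80% threshold. Because the scaling policy targets 60%, a sustained 80% usually means scaling is not keeping up, for example because the group has reached its max size.

```bash
#!/usr/bin/env bash
# Sketch: SNS topic + email subscription + CloudWatch CPU alarm for one ASG.
# Topic name, alarm name and the 80% threshold are hypothetical.

# Print the command to stderr instead of executing it when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    echo "+ $*" >&2
  else
    "$@"
  fi
}

setup_cpu_alarm() {
  local app="$1" email="$2" topic_arn
  topic_arn=$(run aws sns create-topic \
    --name "${app}-alerts" \
    --query TopicArn --output text \
    --region ap-south-1) || return 1
  # Email subscriptions stay pending until the recipient confirms them.
  run aws sns subscribe \
    --topic-arn "$topic_arn" \
    --protocol email \
    --notification-endpoint "$email" \
    --region ap-south-1 || return 1
  # Alarm when average CPU across the group stays above 80% for two
  # consecutive 5-minute periods.
  run aws cloudwatch put-metric-alarm \
    --alarm-name "${app}-asg-high-cpu" \
    --namespace AWS/EC2 \
    --metric-name CPUUtilization \
    --dimensions "Name=AutoScalingGroupName,Value=${app}-asg" \
    --statistic Average \
    --period 300 \
    --evaluation-periods 2 \
    --threshold 80 \
    --comparison-operator GreaterThanThreshold \
    --alarm-actions "$topic_arn" \
    --region ap-south-1
}
```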

Quantitative Outcomes

| Area | Result |
|---|---|
| Deployment Speed | ~60% faster releases |
| Scalability | ~40% improved availability at peak |
| Disaster Recovery | Failover < 15 minutes |
| Cost Optimization | ~35% infra cost reduction |
| Security | ISO-aligned IAM & encryption |

The biggest transformation was not technical alone — it was operational maturity.

Key Architectural Lessons

1. Standardized CI/CD Across Apps Increases Reliability

Consistency across applications reduces deployment variability.

2. Auto Scaling Is Essential for Manufacturing Workloads

Peak production cycles require elastic compute.

3. DR Must Be Designed, Not Assumed

AWS DRS provides structured, testable failover.

4. Security Must Span Identity, Network, and Data

IAM + WAF + KMS + CloudTrail create layered defense.

5. Managed Services Reduce Operational Burden

RDS and DRS significantly lowered infrastructure complexity.

Final Thoughts

Modernizing manufacturing applications is not about lifting servers into the cloud — it is about:

- Standardizing deployments

- Embedding security controls

- Designing for resilience

- Automating disaster recovery

- Scaling predictably

By implementing CI/CD pipelines, Auto Scaling EC2 architecture, RDS, and cross-region disaster recovery, we transformed a fragmented on-prem setup into a secure, resilient multi-application cloud platform.

For AWS practitioners, this case demonstrates how:

DevOps standardization + Managed Services + Structured DR = Enterprise-grade operational maturity.

Author

Rajat Jindal

VP – Presales

AeonX Digital Technology Limited